This post originally appeared in my column for The Drum on March 25. It has been reproduced here with permission.

Liz Roche has sat in rooms where two people from the same brand have completely different views on what their campaign metrics should look like. It's one reason she thinks transparency matters more than standardisation — if the buyers themselves can't agree on what they want measured, the least a retailer can do is show its working.

Roche is vice president of media and measurement at Albertsons Media Collective, and her team is among the authors of new research that shows exactly why that transparency is overdue. Across 42 real ad campaigns, the same media, the same audience, the same creative, and the same spend produced incremental ROAS figures that varied by an average of 6.5x — and a median of 2.5x — depending solely on how the measurement was done. In 83% of those campaigns, the result could flip from positive to negative based on methodology alone.

The white paper, titled iROAS Demystified, is a collaboration between Albertsons Media Collective, Ovative Group, and professors from Northwestern University's Kellogg School of Management. It follows last year's ROAS Demystified paper, which unpacked the limitations of standard return on ad spend. This time, the team turned their attention to iROAS — the metric the industry has been championing as the more rigorous alternative — and found that it carries its own hidden variability.

The paper isn't arguing that iROAS is broken. It's arguing that the same label gets slapped on meaningfully different calculations, and that most brands don't know enough about what's happening under the hood to interpret results with confidence.

"We wanted to explore how different methodologies produce different results," Roche told me. "We know that there's a new retail media network cropping up every day. And understanding what's really working has become more and more challenging."

What the research tested

The study took 42 onsite display campaigns across Albertsons' web properties and varied four methodological choices that any retail media network faces when calculating iROAS:

- How the test and control groups are filtered before matching

- Which matching approach is used (clustering versus propensity score matching)

- What data features inform the match (whether past brand sales are included, for example)

- How incremental revenue is calculated between the two groups (observed sales versus a Bayesian structural time-series model, or BSTS)

Those four choices, combined, produced 54 distinct iROAS values per campaign — without changing anything about how the campaign actually ran.
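To make the mechanics concrete, here is a minimal Python sketch of my own, not taken from the white paper. It assumes iROAS is simply incremental revenue divided by media spend, and every name and dollar figure in it (`Estimate`, `summarize`, the spend and revenue values) is hypothetical. It shows how one campaign, scored under a few methodology variants, can land on both sides of zero.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Estimate:
    """One iROAS estimate for a single campaign under one methodology variant."""
    matching: str               # hypothetical label, e.g. "clustering" or "propensity"
    revenue_model: str          # hypothetical label, e.g. "observed" or "bsts"
    incremental_revenue: float  # estimated revenue attributed to the ads
    spend: float                # media spend for the campaign

    @property
    def iroas(self) -> float:
        # Assumed definition: incremental revenue per dollar of spend.
        return self.incremental_revenue / self.spend


def summarize(estimates):
    """Report the lowest and highest estimate and whether the sign flips."""
    values = [e.iroas for e in estimates]
    low, high = min(values), max(values)
    return {
        "min": low,
        "max": high,
        "range": high - low,
        # True when some variants say the campaign paid off and others say it lost money.
        "sign_flips": low < 0 < high,
    }


# Illustrative numbers only: same campaign, same spend, four measurement variants.
SPEND = 10_000.0
estimates = [
    Estimate("clustering", "observed", 18_000.0, SPEND),
    Estimate("clustering", "bsts", 9_000.0, SPEND),
    Estimate("propensity", "observed", 4_000.0, SPEND),
    Estimate("propensity", "bsts", -1_500.0, SPEND),
]

summary = summarize(estimates)
print(summary)
```

Nothing about the campaign changes between the four rows. Only the measurement recipe does, and that is enough to move the headline number from comfortably positive to negative.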

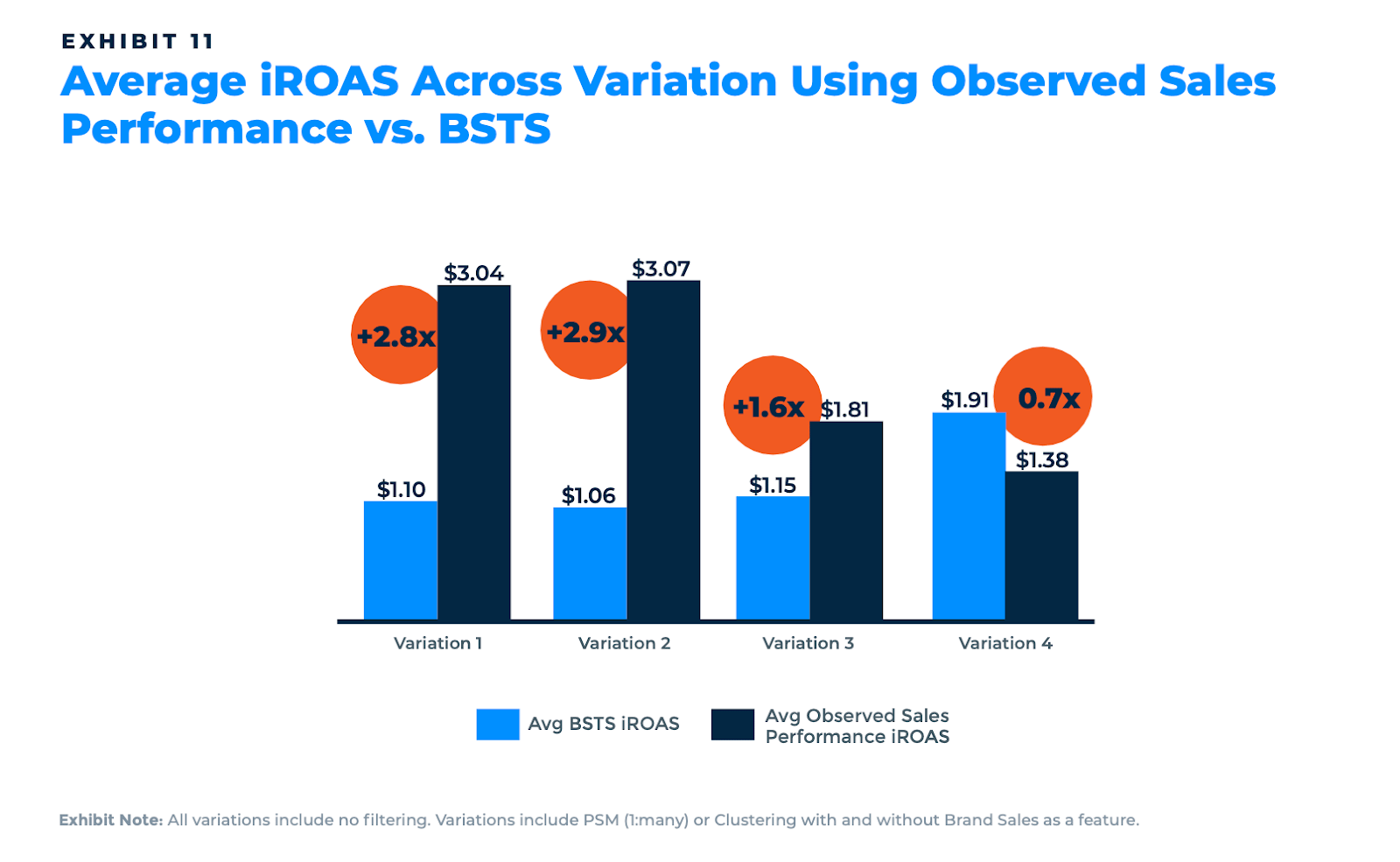

Some specific findings stand out. Propensity score matching produced roughly 12 times better match quality than clustering, as measured by the standardised mean difference across covariates. But it also tended to produce lower iROAS estimates. Whether or not historical brand sales were included as a matching feature could swing the average iROAS from $1.23 to negative $0.14. And the two approaches to calculating incremental revenue (observed sales performance versus a BSTS model) diverged by an average of 90%.
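For readers who haven't met the match-quality measure before, the standardised mean difference compares how far apart the test and control groups sit on a given covariate, scaled by their pooled standard deviation. The sketch below is my own illustration using the common textbook form of that formula. The paper's exact calculation may differ, and the function names, the averaging of absolute values across covariates, and the sample data are all assumptions.

```python
from math import sqrt
from statistics import mean, variance


def standardized_mean_difference(test, control):
    """Difference in group means divided by the pooled standard deviation."""
    pooled_sd = sqrt((variance(test) + variance(control)) / 2)
    if pooled_sd == 0:
        raise ValueError("both groups have zero variance; SMD is undefined")
    return (mean(test) - mean(control)) / pooled_sd


def mean_abs_smd(test_covariates, control_covariates):
    """Average absolute SMD across covariates; closer to zero means a better match."""
    smds = [
        abs(standardized_mean_difference(test_covariates[name], control_covariates[name]))
        for name in test_covariates
    ]
    return mean(smds)


# Hypothetical covariate: prior-period basket size for four shoppers per group.
test_group = [10, 12, 14, 16]
control_group = [9, 11, 13, 15]

smd = standardized_mean_difference(test_group, control_group)
print(round(smd, 3))
```

A well-matched control group should look like the test group on every covariate before the campaign starts, so a lower value here is what "better match quality" means in practice.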

Derek Nelson, senior director of retail media consulting at Ovative Group, said: "Customers doing nothing different, media doing nothing different, just putting everything together differently, and you end up with wildly different results."

Jordan Witmer, managing director of retail media at agency Salt XC, sees this play out across his clients. "Results still reflect how audiences are selected and campaigns are delivered," he says, "especially when systems are designed to show ads to shoppers already likely to buy." That dynamic — where measurement captures correlation with purchase intent rather than genuine lift — is precisely what the Ovative-Albertsons research is trying to untangle.

Don't throw the baby out with the bathwater

The natural question, given a finding that 83% of campaigns can flip from positive to negative, is whether the industry should be using iROAS as a headline KPI at all.

Both Roche and Nelson pushed back on that conclusion. Nelson's argument is that the methodologies are testing different things (new-to-SKU, new-to-brand, new-to-category), and the need to simplify and standardise has cost the industry some important nuance. "iROAS is such a broad bucket term that it kind of catches a lot of things," he said. "The need to simplify has lost some of the nuance. That's overall a good thing, but it gets used as shorthand." His view: it's on brands to ask the questions and decide what to do with the answers.

Roche added that the responsibility doesn't sit with brands alone. "I think it's also our responsibility to educate in this space," she said. "Not every one of those brands has a powerhouse data science team who can really make sense of all this. I think that's another reason why partnering with Kellogg and partnering with Ovative on research like this — from my perspective, this makes it conceptually very accessible to folks."

Roche framed Albertsons as having a stewardship role. "Let's get to the actual truth of what's happening," she said. "Everyone wins when we sell more units."

That ethos tracks with what I've observed about Albertsons Media Collective. When I profiled Brian Monahan, the executive leading the network, for this column last year, the same thread emerged: a retailer-first philosophy where media measurement serves the core business rather than existing to post flattering numbers.

The gap that matters most

The research's implications land differently depending on where you sit. For the top-tier CPGs with dedicated data science teams, this is confirmation of something they've been wrestling with — and a useful framework for structuring conversations with their other retail media partners. Many of the largest brands already rank and stack their RMN partners using internal models, and Roche acknowledged that she's seen those models up close. Her focus: making sure Albertsons provides the transparency those models need to be properly calibrated.

But a mid-market brand selling through Albertsons probably doesn't have a data scientist on staff, let alone one who can evaluate whether their iROAS report used propensity score matching or clustering, or whether brand sales were included as a matching feature. As Roche noted, many of these brands receive a report and have no choice but to take the number at face value.

To that end, the white paper's appendix gives brands without deep analytics capability a starting point for asking the right questions: what methodology was used? Who was included in the analysis? How was the control group built? What are the known limitations?

Curiosity is the real competitive advantage

I've been reading Brené Brown's Atlas of the Heart recently, and a concept from the book came to me as I reflected on this conversation. Brown defines curiosity as recognising a gap in your knowledge and becoming emotionally invested in closing it. She calls it a vulnerable act — a choice to embrace uncertainty over the safety of "knowing."

The retail media industry has been operating in a comfort zone where familiar metrics like ROAS are treated as settled numbers rather than questions to investigate. Brands receive a report, see a positive figure, and move on. Retailers deliver results without always explaining the methodology underneath. Both sides are protecting themselves from the discomfort of admitting the numbers might not mean what they think.

Roche described what genuine curiosity looks like in practice: getting data scientists from both sides of the table to sit down and, as she put it (seemingly unironically), "duke it out for about six hours" until they reach agreement on a methodology. That requires vulnerability from the retailer, whose methods are being scrutinised, and from the brand, which might learn that its first conclusions were misplaced.

Brené Brown also describes curiosity as a tool that combats perfectionism and the need for self-protection. In retail media, the self-protective instinct is strong: retailers don't want to report lower numbers, brands don't want to discover wasted spend, and agencies don't want to question the metrics their performance is judged on. I wrote about this dynamic in detail in a recent piece on why ROAS refuses to die — it's a collective action problem where everyone behaves rationally and the system stays broken.

The antidote, this research suggests, is the willingness to sit in the gap for a bit. Not to abandon iROAS, but to ask what's behind it.

What next

The white paper closes with a call to action that I think is carefully calibrated. It doesn't demand standardisation of methodology — something I've argued elsewhere may be unrealistic given the structural differences between retailers. Instead, it proposes transparency standards: requiring networks to disclose how they measure, how they construct test and control groups, what features they use, and the limitations of their approach.

As Nelson noted in our conversation, "It's more of a push for transparency than it is for a push for standardisation." Brands don't need every retailer to measure the same way. But they do need to understand what they're getting. The Skai x Stratably 2026 State of Retail Media Study reinforces this: when asked what would accelerate their retail media investment, only 15% of brands said standardisation across retailers. Better measurement came in at 52%. Brands want proof that it works more than they want everyone measuring identically.

The retailers gaining credibility in this market — and I've seen this pattern across profiles of retail media leaders at CVS, Instacart, Best Buy, Ace Hardware, Costco, and Dollar General for this column — are the ones choosing transparency. Albertsons is making a bet that showing its working, including the uncomfortable parts, builds more durable advertiser trust than posting the highest possible iROAS.

The industry doesn't need a single golden metric. It needs the willingness to look under the hood and be curious about what it finds there.